'The Validation Gap' / Part 5 of 5

Over the course of this series, we have examined why PCB prototype failures remain stubbornly persistent: the spec compatibility errors that manual review misses at predictable rates, the behavioral verification that simulation tools can perform but real project constraints prevent, the economic burden that accumulates when these gaps compound across projects and years. The diagnosis is clear. The question that remains is different, and harder: what would it actually take to close the validation gap? Not incrementally, but structurally.

Answering that question requires an honest look at what exists today, an examination of how another engineering discipline solved an analogous problem, and a concrete description of what integrated hardware validation would need to accomplish. It also requires acknowledging that the forces converging on this problem are making the status quo increasingly untenable.

The tools and processes that the PCB design industry relies on are not broken. They are incomplete.

Design Rule Checking catches real errors: unconnected nets, clearance violations, trace width issues, and physical constraints that would prevent reliable manufacturing. Modern DRC implementations are fast, thorough, and well integrated into the design workflow. Design for Manufacturability reviews have become increasingly automated and comprehensive. These are genuine achievements.

Simulation platforms represent another. SPICE-based circuit simulation, first developed at UC Berkeley in 1973 [1], has evolved into a family of tools capable of analyzing everything from basic DC operating points to transient behavior in complex mixed-signal systems. Signal integrity and power integrity analysis tools can model transmission line effects, crosstalk, and power distribution across entire boards.

Peer review, while manual, provides value that automated tools do not. A fresh pair of eyes catches things the original designer's familiarity blindness obscures. Many teams have developed structured review checklists and formal design review gates that measurably improve quality.

The problem is what falls between these capabilities. DRC validates physical correctness but has no visibility into functional correctness — whether a 3.3V sensor can tolerate 5V on its input, whether UART transmit connects to the other device's receive, whether a voltage divider maintains its required output across component tolerances. Simulation can verify behavior, but as explored in Article 2, only for the handful of subcircuits an engineer has time to analyze, and only when the analysis is configured correctly. Peer review catches what reviewers happen to notice, subject to the cognitive constraints that produce miss rates of 20-30% on complex inspection tasks [2].

What remains unaddressed is systematic verification that bridges these capabilities: automated confirmation that component specifications are compatible across the full design, and that circuit behavior meets design intent across the full range of operating conditions. This is the compound gap at the intersection of spec compatibility and behavioral verification, and none of DRC, sporadic simulation, or manual review covers it reliably.

The gap has persisted not because the industry lacks awareness but because closing it requires solving a hard problem. Checking more rules or running more simulations is not sufficient. The challenge is knowing what to check and what to simulate across a design of arbitrary complexity, without requiring the engineer to specify every question manually.

Earlier in this series, we drew a brief parallel between hardware validation and software quality assurance: DRC as the syntax checker, manual review as the only test suite, no equivalent of the CI/CD pipeline that systematically verifies whether the design does what it is supposed to do [3]. That comparison deserves a closer examination, because the specifics of how software solved this problem contain practical lessons for hardware, and the transformation happened within living memory.

In the 1990s, software quality assurance looked remarkably similar to where hardware validation stands today. Developers wrote code, ran it through a compiler that checked syntax but not logic, and relied on manual testing and code review to catch bugs. The error detection rate depended entirely on the time, attention, and expertise of the humans doing the reviewing, with all the same constraints that hardware teams face.

The transformation happened in stages, each building on the last. Unit testing frameworks such as JUnit emerged in the late 1990s and early 2000s, allowing developers to write automated tests for individual functions and modules [4]. The hardware analog: automated verification of individual component interfaces and subcircuits. Unit tests did not replace code review, but they caught entire categories of errors automatically, consistently, and early.

Continuous integration followed in the mid-2000s, formalized by Martin Fowler and adopted rapidly across the industry [5]. Every code commit triggered an automated rebuild and full test suite execution, catching integration errors within minutes rather than weeks. The hardware parallel: a system that automatically re-verifies design correctness whenever the schematic changes, catching interaction errors between components that individual checks might miss.

Static analysis added another layer, examining code without executing it to identify potential bugs, security vulnerabilities, and structural problems. Static analyzers found categories of errors that were difficult to test for dynamically: null pointer dereferences, resource leaks, race conditions. The hardware analog: analyzing a schematic to identify potential functional issues — voltage incompatibilities or missing pull-up resistors — without requiring full simulation.

Most recently, AI-assisted quality assurance has begun augmenting these automated pipelines. According to Gartner's 2024 Market Guide for AI-Augmented Software-Testing Tools, 80% of enterprises are projected to integrate AI-augmented testing tools into their software engineering toolchains by 2027, up from approximately 15% in early 2023 [6]. Machine learning now prioritizes test cases, predicts likely failure points, and generates test scenarios that human testers would not think to create.

The cumulative result is striking. Modern software organizations routinely deploy code changes to production multiple times per day with confidence, not because their developers make fewer mistakes, but because their validation infrastructure catches errors systematically and early. No serious software organization ships a product verified only by a compiler and human code review. The automated testing pipeline is core infrastructure, not an optional extra.

The hardware analogy is imperfect. Software can be recompiled and redeployed in minutes; a PCB respin takes weeks and costs tens of thousands of dollars. Software tests can be run in isolation; hardware behavior depends on physical interactions that are harder to model. But these differences make the case for automated validation stronger in hardware, not weaker. When the cost of an escaped defect is orders of magnitude higher, the economic argument for catching it earlier becomes overwhelming.

The deeper lesson from software is not about any specific tool or technique. It is about a shift in expectations. Two decades ago, the software industry accepted that bugs would be found in production, and developers were judged by how quickly they could fix them. Today, the expectation is that most defects will be caught before release, and the quality of an organization's testing infrastructure is as important as the quality of its developers. Hardware has not yet made that shift, but the forces that drove it in software are now bearing down on hardware with increasing urgency.

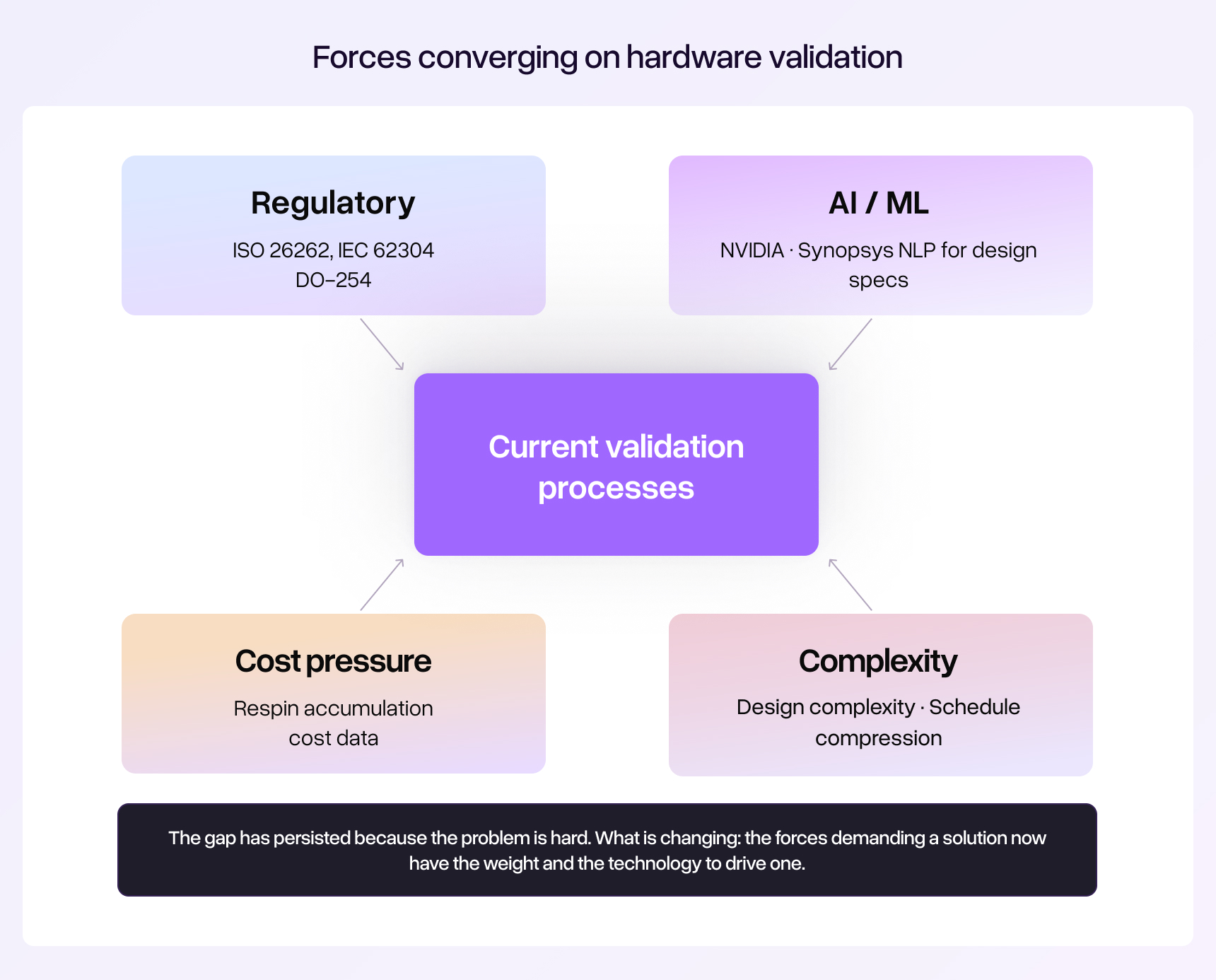

The validation gap described in this series is not new. What is new is that several converging forces are making it increasingly untenable.

Design complexity and schedule pressure have been discussed at length in earlier articles: modern PCBs incorporate hundreds of components across multiple voltage domains and communication interfaces [7], while development cycles continue to compress the window for thorough review. These trends are accelerating, not stabilizing. But two additional forces deserve attention because they are changing the terms of the problem rather than simply intensifying it.

Regulatory requirements are formalizing what was previously optional. In safety-critical industries, the expectation for design verification rigor is being written into standards with legal force. ISO 26262 for automotive systems requires systematic hardware verification activities scaled to the Automotive Safety Integrity Level (ASIL) of the application [8]. IEC 62304 imposes lifecycle process requirements for medical device software development [9]. Aerospace applications are governed by DO-254, which mandates documented verification of hardware design correctness. These standards do not recommend good practices; they require evidence that verification has been performed systematically. Organizations serving these markets are finding that manual review documentation alone is increasingly difficult to defend in audits. And as these standards propagate, they raise expectations across adjacent industries.

AI and machine learning are making new approaches feasible. The application of AI to electronic design automation has moved from research curiosity to strategic priority. In December 2025, NVIDIA invested $2 billion in Synopsys as part of an expanded partnership to integrate accelerated computing and agentic AI capabilities across the EDA stack, with the explicit goal of enabling 'R&D teams to design, simulate and verify intelligent products with greater precision, speed and at lower cost' [10]. This follows years of investment by EDA vendors in ML-driven verification: Siemens EDA's Solido Design Environment uses machine learning to achieve SPICE-accurate coverage at a fraction of traditional simulation effort [11], while natural language processing is being applied to translate design specifications into formal verification assertions [12].

Meanwhile, the economic pressure continues to sharpen. The $500,000 to $1 million that a mid-sized team accumulates annually in preventable respin costs, documented in Article 4, is drawing executive scrutiny in an era of supply chain volatility and margin compression. Engineering leaders are being asked to show not just that their teams perform design reviews, but that those reviews measurably reduce error rates.

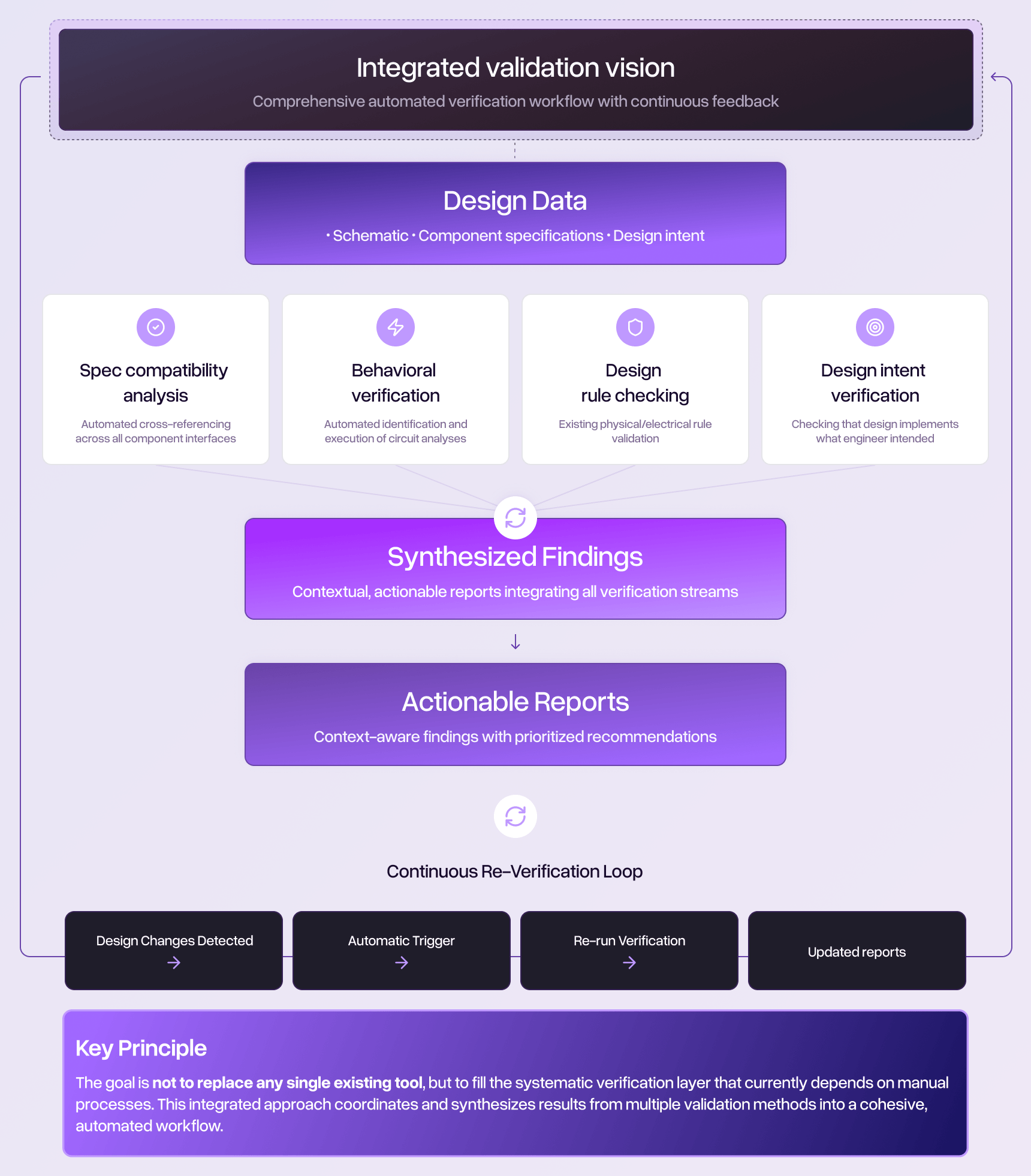

Given these forces, what would a comprehensive approach to hardware validation require? Not as a product pitch, but as an engineering specification for what the industry needs.

Automatic identification of what needs to be verified. The most fundamental limitation of current approaches is that they require the engineer to specify what to check. DRC checks a predefined set of physical rules. Simulation analyzes the specific circuits the engineer configures. Peer review covers whatever the reviewer happens to examine. An integrated system would analyze the design itself to determine what questions need to be asked: which interfaces have voltage compatibility requirements, which circuits have operating points that could be marginal, which component ratings need verification against actual operating conditions. The system would identify the verification agenda, not just execute it.
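As a rough illustration of deriving the verification agenda from the design itself, the sketch below walks a toy netlist and emits the questions that connectivity alone implies. The `Pin` structure, its field names, and the two rules shown are assumptions made for this example; a real tool would draw on far richer component data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pin:
    """One pin on a net. All field names are hypothetical."""
    ref: str       # reference designator, e.g. "U1"
    name: str      # pin name, e.g. "TX"
    role: str      # "output" or "input"
    rail_v: float  # supply rail of the owning component, in volts


def build_agenda(nets: dict[str, list[Pin]]) -> list[str]:
    """Derive verification questions from connectivity alone."""
    agenda = []
    for net, pins in sorted(nets.items()):
        drivers = [p for p in pins if p.role == "output"]
        receivers = [p for p in pins if p.role == "input"]
        # Connections that cross voltage domains need a logic-level check.
        for d in drivers:
            for r in receivers:
                if d.rail_v != r.rail_v:
                    agenda.append(
                        f"{net}: check {d.ref}.{d.name} ({d.rail_v} V) "
                        f"against {r.ref}.{r.name} ({r.rail_v} V)"
                    )
        # Inputs with no driver need a defined level from somewhere.
        if receivers and not drivers:
            agenda.append(f"{net}: no driver; confirm pull-up or pull-down")
    return agenda


# Illustrative design data, not taken from any real board.
nets = {
    "UART_TX": [Pin("U1", "TX", "output", 5.0), Pin("U2", "RX", "input", 3.3)],
    "SPI_CLK": [Pin("U1", "SCK", "output", 5.0), Pin("U3", "SCK", "input", 5.0)],
    "SENSOR_EN": [Pin("U2", "EN", "input", 3.3)],
}
agenda = build_agenda(nets)
for question in agenda:
    print(question)
```

The engineer never lists the interfaces to check: the questions come from the netlist, which is the shift this capability describes.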

Systematic spec compatibility checking at scale. Cross-referencing component specifications across a complex design involves checking thousands of parameter combinations: input voltage ranges against output voltage ranges, logic level thresholds against driver output levels, current consumption against supply capability, timing requirements against clock characteristics. This work is currently manual, tedious, and performed with the error rates that characterize complex human inspection tasks. Automating it requires not just rule-checking, but understanding the functional relationships between components and the conditions under which their specifications must be compatible.
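One of those parameter combinations, logic levels between a driver and a receiver, can be sketched as follows. The class and field names are hypothetical, and the numeric limits are illustrative placeholders rather than values from any particular datasheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OutputSpec:
    """Driver-side limits (hypothetical field names)."""
    voh_min: float   # minimum guaranteed high-level output, V
    vol_max: float   # maximum guaranteed low-level output, V
    vout_max: float  # highest voltage the pin can drive, V


@dataclass(frozen=True)
class InputSpec:
    """Receiver-side limits (hypothetical field names)."""
    vih_min: float      # minimum voltage recognized as high, V
    vil_max: float      # maximum voltage recognized as low, V
    vin_abs_max: float  # absolute maximum input voltage, V


def check_logic_compatibility(driver: OutputSpec, receiver: InputSpec) -> list[str]:
    """Return human-readable issues; an empty list means compatible."""
    issues = []
    if driver.voh_min < receiver.vih_min:
        issues.append("driver high level is below receiver VIH threshold")
    if driver.vol_max > receiver.vil_max:
        issues.append("driver low level is above receiver VIL threshold")
    if driver.vout_max > receiver.vin_abs_max:
        issues.append("driver output exceeds receiver absolute maximum input")
    return issues


# Illustrative numbers: a 5 V output driving an input limited to 3.6 V.
five_volt_out = OutputSpec(voh_min=4.4, vol_max=0.1, vout_max=5.0)
sensor_in = InputSpec(vih_min=2.0, vil_max=0.8, vin_abs_max=3.6)
issues = check_logic_compatibility(five_volt_out, sensor_in)
print(issues)
```

The thresholds here are met, so a check that looked only at logic levels would pass; the overvoltage is caught because the absolute maximum rating is compared as well.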

Behavioral verification that scales with design complexity. Simulation tools exist but are manually intensive to configure and practically limited to spot-checking a fraction of the circuits that could benefit from analysis. Integrated behavioral verification would automatically identify circuits requiring analysis, configure appropriate simulations based on component specifications and operating conditions, execute those analyses across the full design, and compile results that map findings to design intent. Systematic coverage rather than selective spot-checking.

Results compilation mapped to design intent. Raw simulation data and specification comparison tables are not, by themselves, useful to a busy engineer. Integrated validation would synthesize findings into actionable reports that explain not just what was found, but why it matters: 'This voltage divider produces an output of 2.95V to 3.05V across the tolerance range of its resistors, but the downstream ADC reference input requires 2.99V to 3.01V. The output can fall outside the required range under worst-case component tolerances.' This kind of contextual reporting requires understanding the purpose of the circuit, not just its topology.
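The arithmetic behind a finding of that kind is a worst-case tolerance analysis. A minimal sketch follows, assuming an unloaded two-resistor divider with an ideal supply; the component values are illustrative and are not meant to reproduce the figures quoted above.

```python
def divider_worst_case(vin: float, r_top: float, r_bottom: float, tol: float):
    """Return (lowest, highest) output of an unloaded resistive divider.

    Vout = Vin * R_bottom / (R_top + R_bottom). The output is lowest when
    R_top is at its maximum and R_bottom at its minimum, and highest in
    the opposite corner. `tol` is fractional, so 0.01 means 1 percent.
    """
    def vout(rt: float, rb: float) -> float:
        return vin * rb / (rt + rb)

    lowest = vout(r_top * (1 + tol), r_bottom * (1 - tol))
    highest = vout(r_top * (1 - tol), r_bottom * (1 + tol))
    return lowest, highest


def within(lowest: float, highest: float, req_min: float, req_max: float) -> bool:
    """True only if the whole output range sits inside the requirement."""
    return req_min <= lowest and highest <= req_max


# Illustrative values: 5 V supply, 10k over 15k, 1 percent resistors.
lo_v, hi_v = divider_worst_case(5.0, 10e3, 15e3, 0.01)
print(f"output range: {lo_v:.3f} V to {hi_v:.3f} V")
print("meets 2.99 V to 3.01 V requirement:", within(lo_v, hi_v, 2.99, 3.01))
```

A fuller analysis would also account for supply tolerance, load current, and temperature drift, which this sketch deliberately leaves out.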

Continuous verification as designs evolve. Hardware designs go through many iterations. Components are substituted. Pin assignments change. Power domains are reorganized. Each change can introduce new errors or invalidate previous verification results. Integrated validation would operate continuously, re-checking affected portions of the design after each modification, much as a CI/CD pipeline re-runs relevant tests after each code commit. The shift-left verification concept already gaining traction in the PCB design community [3] is a step in this direction. The natural extension is verification that runs not just early, but continuously throughout the design lifecycle.
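The "re-check only what changed" step can be sketched as a diff between two design revisions. The data layout below (nets as sets of `(ref, pin)` pairs, parts as a map from reference designator to part number) and all part numbers are assumptions made for illustration.

```python
def affected_nets(old_nets, new_nets, old_parts, new_parts):
    """Return the nets whose checks must be re-run after a design change.

    A net is affected if its connectivity changed, or if any component
    on it was swapped for a different part number.
    """
    changed_refs = {
        ref
        for ref in old_parts.keys() | new_parts.keys()
        if old_parts.get(ref) != new_parts.get(ref)
    }
    affected = set()
    for net in old_nets.keys() | new_nets.keys():
        before = old_nets.get(net, frozenset())
        after = new_nets.get(net, frozenset())
        if before != after:
            affected.add(net)  # pins added, removed, or reassigned
        elif any(ref in changed_refs for ref, _pin in after):
            affected.add(net)  # same wiring, different component
    return affected


# Hypothetical revisions: U3 is substituted and the EN net moves to a new GPIO.
old_nets = {
    "UART_TX": frozenset({("U1", "TX"), ("U2", "RX")}),
    "SDA": frozenset({("U1", "SDA"), ("U3", "SDA")}),
    "EN": frozenset({("U1", "GPIO1"), ("U4", "EN")}),
}
new_nets = dict(old_nets, EN=frozenset({("U1", "GPIO2"), ("U4", "EN")}))
old_parts = {"U1": "MCU-A", "U2": "GPS-A", "U3": "SENSOR-A", "U4": "LDO-A"}
new_parts = dict(old_parts, U3="SENSOR-B")

recheck = affected_nets(old_nets, new_nets, old_parts, new_parts)
print(sorted(recheck))
```

This mirrors how a CI pipeline selects tests touched by a commit: the unchanged UART net keeps its previous result, while the substituted sensor and the reassigned enable line are verified again.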

The integrated validation described above requires more than better rule engines or faster simulators. It requires engineering judgment: the ability to look at a design and understand not just what it is, but what it is trying to do, and whether it will succeed.

Understanding design intent, not just design data. A voltage divider is a circuit that produces a specific voltage for a specific purpose: feeding a reference input, biasing a transistor, setting a threshold. Intelligent validation evaluates whether the implementation achieves that purpose across all relevant conditions, not just whether the individual components meet their ratings.

Identifying questions the engineer did not know to ask. Every experienced engineer has a story about a failure mode they did not anticipate: a thermal problem they did not think to check, a tolerance stack they did not realize was marginal. The circuits that fail are disproportionately the ones that did not get analyzed, because the engineer did not perceive the risk. A system that identifies potential issues the engineer has not explicitly asked about addresses one of the most fundamental limitations of current validation — the question-framing problem described in Article 2.

Learning from failure patterns. The electronics industry collectively encounters the same categories of design errors repeatedly. Voltage level mismatches between 3.3V and 5V domains. Capacitors used near their rated voltage without adequate derating. UART TX/RX swaps. Active-low enable signals connected to active-high outputs. These patterns are well-known but consistently escape manual review because each instance looks slightly different in context. An intelligent system recognizes these patterns across designs of arbitrary complexity, applying lessons learned from thousands of previous failures to each new design it examines.
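Two of these recurring patterns are simple enough to express as explicit checks. The sketch below assumes pins are already tagged with a function label such as `UART_TX`, and it uses a 50 percent capacitor derating limit purely as a placeholder; actual derating policy varies by team and dielectric.

```python
def uart_swap_issues(nets):
    """Flag nets whose UART pins all share one direction (TX-TX or RX-RX)."""
    issues = []
    for net, pins in sorted(nets.items()):
        uart = [func for _ref, func in pins if func in ("UART_TX", "UART_RX")]
        if len(uart) >= 2 and len(set(uart)) == 1:
            issues.append(f"{net}: {uart[0]} connected only to {uart[0]}")
    return issues


def derating_issues(caps, max_ratio=0.5):
    """Flag capacitors whose applied voltage exceeds max_ratio of rating.

    `caps` maps a reference designator to (applied volts, rated volts).
    The default ratio is an assumed policy, not an industry constant.
    """
    issues = []
    for ref, (v_applied, v_rated) in sorted(caps.items()):
        if v_applied > max_ratio * v_rated:
            issues.append(
                f"{ref}: {v_applied} V on a {v_rated} V part "
                f"exceeds {max_ratio:.0%} derating"
            )
    return issues


# Illustrative design data.
uart_nets = {
    "GPS_TX": [("U1", "UART_TX"), ("U5", "UART_TX")],   # swapped
    "GPS_RX": [("U1", "UART_RX"), ("U5", "UART_RX")],   # swapped
    "DEBUG": [("U1", "UART_TX"), ("J2", "UART_RX")],    # correct
}
caps = {"C1": (5.0, 6.3), "C2": (3.3, 16.0)}

swap_findings = uart_swap_issues(uart_nets)
cap_findings = derating_issues(caps)
print(swap_findings)
print(cap_findings)
```

Hand-written rules like these only cover patterns someone has already named. The harder part, which the paragraph above attributes to an intelligent system, is recognizing the same mistake when pin names and context differ from board to board.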

Synthesizing insights across the full design context. Current tools analyze components and subcircuits in isolation, but many design errors arise from interactions between subsystems: a power supply that is adequate for the nominal load but insufficient when all peripherals are active simultaneously, or a signal that is correctly implemented in one section of the schematic but incompatible with the component it connects to three pages away. Intelligent validation maintains awareness of the full design context, evaluating local decisions against global constraints.
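The power supply example is a case where every local decision looks fine and only the sum reveals the problem. A minimal sketch, with hypothetical load names and current figures:

```python
def rail_margins(supply_ma, loads_ma):
    """Return (typical margin, peak margin) in mA for one supply rail.

    `loads_ma` maps a load name to (typical mA, peak mA). The peak case
    assumes all loads draw their maximum at the same time.
    """
    typical = sum(t for t, _p in loads_ma.values())
    peak = sum(p for _t, p in loads_ma.values())
    return supply_ma - typical, supply_ma - peak


# Illustrative 3.3 V rail fed by a 500 mA regulator.
loads = {
    "mcu": (50, 120),
    "radio": (80, 350),
    "sensors": (10, 40),
    "status_leds": (20, 60),
}
typ_margin, peak_margin = rail_margins(500, loads)
print(f"typical margin: {typ_margin} mA, peak margin: {peak_margin} mA")
```

With these numbers the rail has ample headroom under typical load and a deficit when all peripherals peak together, which is the kind of global constraint a per-component review tends to miss.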

The technology to support these capabilities exists and is advancing rapidly. In the IC domain, AI-driven verification already uses machine learning models to achieve SPICE-accurate coverage at a fraction of the simulation effort [11]. Natural language processing translates design specifications into formal verification assertions [12]. Foundation models trained on circuit representations demonstrate the ability to understand design structure and predict behavior. The trajectory from IC to PCB validation is not a question of whether but when, as the underlying approaches are directly transferable.

The fully integrated, AI-assisted validation pipeline described above is not a turnkey reality today. But that does not mean engineering organizations should wait. The teams that benefit most from emerging validation capabilities will be the ones that have already built the foundations: organizations that understand their respin patterns, have formalized their verification processes, and can measure improvement when better tools become available.

Formalize your verification coverage. Most teams perform some form of design review, but few systematically track what gets checked and what does not. An explicit verification matrix that maps critical interfaces, voltage domains, and component ratings to specific review steps makes gaps visible. You cannot close gaps you have not identified.
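A verification matrix does not need special tooling to be useful. The sketch below represents one as a plain mapping and reports what no review step covers; the item names and checklist references are invented for illustration.

```python
def uncovered_items(matrix):
    """Return the items that have no review step assigned, sorted by name."""
    return sorted(item for item, steps in matrix.items() if not steps)


def coverage_ratio(matrix):
    """Fraction of items with at least one review step (0.0 if empty)."""
    if not matrix:
        return 0.0
    covered = sum(1 for steps in matrix.values() if steps)
    return covered / len(matrix)


# Hypothetical matrix: design item -> review steps that check it.
matrix = {
    "UART0 logic levels (MCU to GPS)": ["schematic checklist item 12"],
    "3V3 rail peak current budget": [],
    "ADC reference divider tolerance": ["tolerance worksheet"],
    "USB connector ESD protection": [],
}
gaps = uncovered_items(matrix)
print(f"coverage: {coverage_ratio(matrix):.0%}")
for item in gaps:
    print("not covered:", item)
```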

Separate spec verification from design review. Design reviews tend to conflate architectural assessment with detailed spec checking, and the spec checking suffers because it is the less engaging task. Treating spec compatibility verification as a distinct activity — with its own checklist and dedicated time allocation — improves thoroughness and creates a clear baseline for measuring what automated tools eventually provide.

Prioritize simulation coverage strategically. If comprehensive simulation is not practical under project constraints, at minimum identify the circuits with the highest consequence of failure and the highest uncertainty, and ensure those receive analysis. A thermal analysis of the highest-power regulator and a tolerance analysis of the most critical voltage reference provide more value than trying to simulate everything superficially.

Track your respin root causes. Organizations that systematically categorize the errors that cause their respins quickly discover patterns — and those patterns inform where to invest verification effort. If 40% of your respins stem from voltage compatibility issues, that is where your review process needs reinforcement, and where emerging automated tools will deliver the most immediate value.
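Tallying root causes is a few lines once respins are recorded with a category. The records below are made up for illustration, and the category names are assumptions rather than a standard taxonomy.

```python
from collections import Counter


def root_cause_shares(records):
    """Return (cause, share of respins) pairs, most frequent first."""
    counts = Counter(records)
    total = sum(counts.values())
    return [(cause, n / total) for cause, n in counts.most_common()]


# Hypothetical respin log: one root-cause label per respin.
respin_log = (
    ["voltage compatibility"] * 4
    + ["footprint mismatch"] * 3
    + ["pinout error"] * 2
    + ["thermal"] * 1
)
shares = root_cause_shares(respin_log)
for cause, share in shares:
    print(f"{cause}: {share:.0%}")
```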

The PCB design industry sits at an inflection point. The tools and processes that were adequate for simpler designs, longer development cycles, and less regulated markets are reaching their structural limits. The software industry faced the same inflection point twenty years ago and responded by building automated quality assurance into the fabric of the development process. The result was not the elimination of all bugs, but a dramatic reduction in escaped defects and a fundamental shift in what 'ready to release' meant.

Hardware is overdue for the same transformation. The technology to support it is no longer theoretical. The economic case is arithmetic. The regulatory pressure is real. The question is not whether integrated, intelligent validation will become standard practice in hardware development, but whether your organization will be among those that gain the competitive advantage of fewer respins, faster time-to-market, and higher first-spin success rates — or among those that continue absorbing the cost of a validation gap the industry now has the means to close.

The tools are catching up to the problem. The question is whether the industry will catch up to the tools.
